We fine-tune 7 models including ViTs, DINO, CLIP, ConvNeXt, ResNet, on

GitHub - rwightman/timm: PyTorch image models, scripts, pretrained weights -- ResNet, ResNeXT, EfficientNet, EfficientNetV2, NFNet, Vision Transformer, MixNet, MobileNet-V3/V2, RegNet, DPN, CSPNet, and more

NeurIPS 2023

Ananya Kumar's research works | Stanford University, CA (SU) and other places

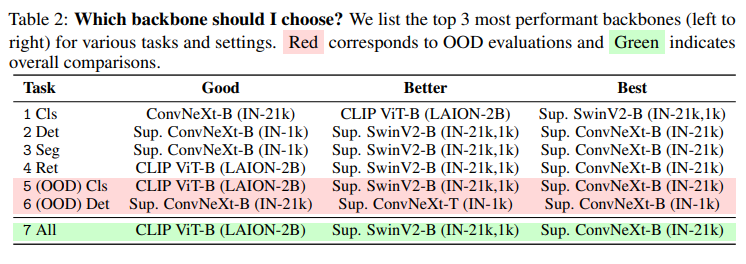

The Computer Vision's Battleground: Choose Your Champion, by Salvatore Raieli

Vision Transformer (ViT)

Review — ConvNeXt: A ConvNet for the 2020s, by Sik-Ho Tsang

GitHub - huggingface/pytorch-image-models: PyTorch image models, scripts, pretrained weights -- ResNet, ResNeXT, EfficientNet, NFNet, Vision Transformer (ViT), MobileNet-V3/V2, RegNet, DPN, CSPNet, Swin Transformer, MaxViT, CoAtNet, ConvNeXt, and more

The freeze out distribution, $f_{\mathrm{free}}(x, p)$, in the Rest Frame of the

[2304.12210] A Cookbook of Self-Supervised Learning

Merve Noyan on LinkedIn: Can't emphasize this enough but your donations can save lives of many…

Masked Autoencoders Are Scalable Vision Learners

GitHub - leondgarse/keras_cv_attention_models: Keras beit,caformer,CMT,CoAtNet,convnext,davit,dino,efficientdet,edgenext,efficientformer,efficientnet,eva,fasternet,fastervit,fastvit,flexivit,gcvit,ghostnet,gpvit,hornet,hiera,iformer,inceptionnext,lcnet