Fitting AI models in your pocket with quantization - Stack Overflow

How to improve my knowledge and skills in artificial intelligence - Quora

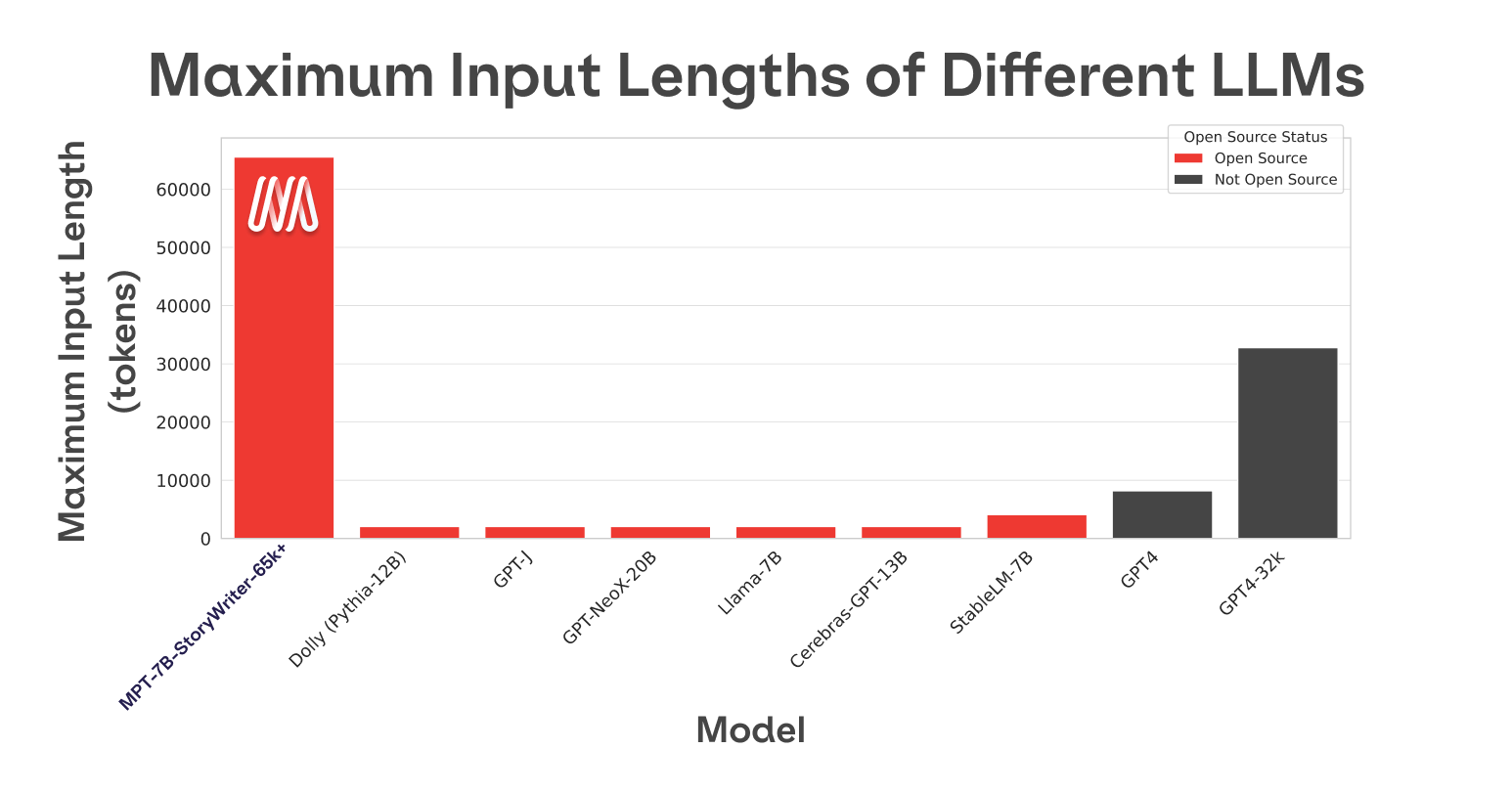

MPT-7B and The Beginning of Context=Infinity — with Jonathan


neural network - Does static quantization enable the model to feed a layer with the output of the previous one, without converting to fp (and back to int)? - Stack Overflow

2024 Outlook for Language Models

Understanding Quantization: Optimizing AI Models for Efficiency

Andrew Jinguji on LinkedIn: Fitting AI models in your pocket with quantization

python - convert .pb model into quantized tflite model - Stack

The NLP Cypher, 02.28.21. Zeroshot, by Ricky Costa

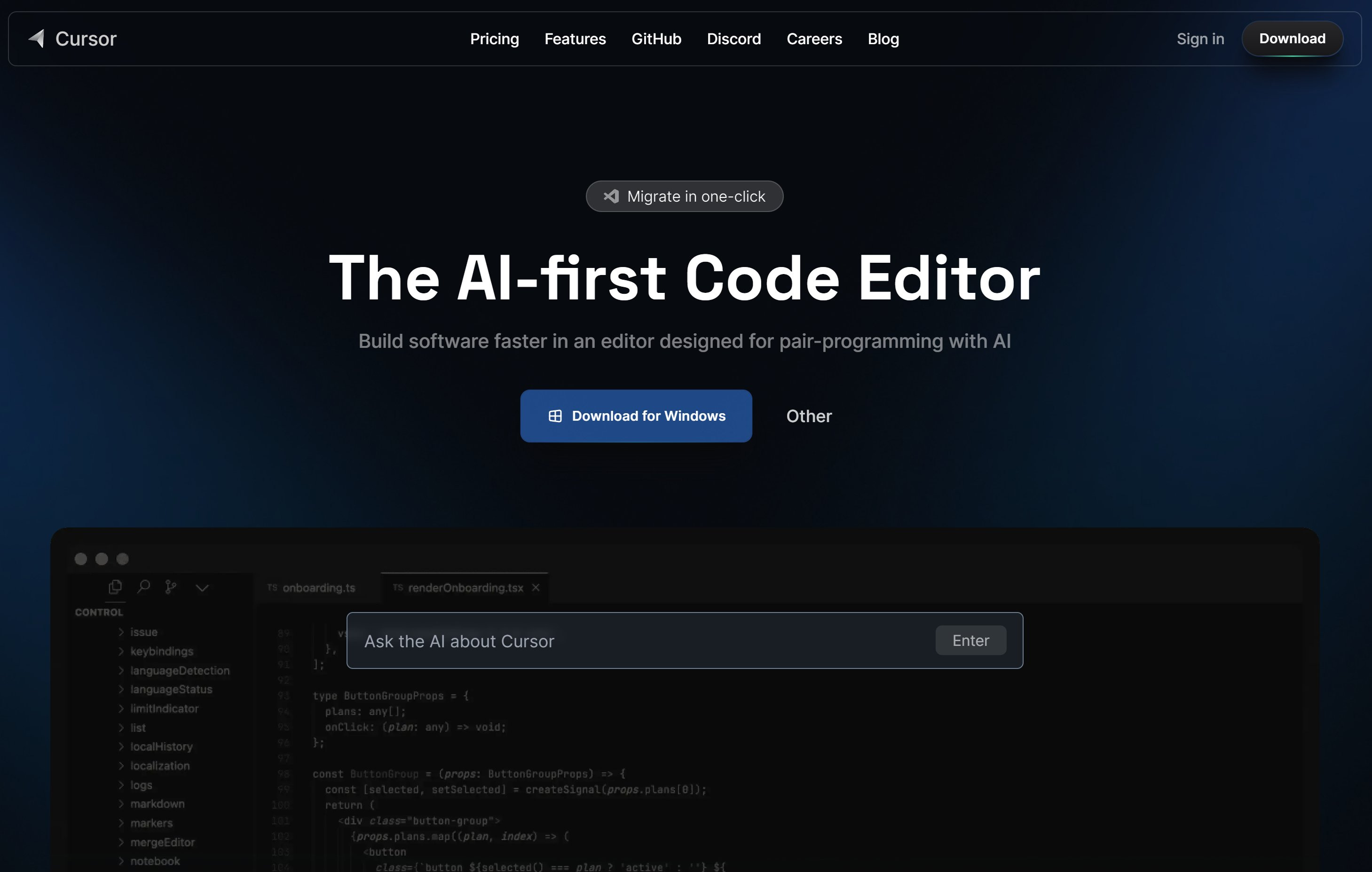

Cursor.so: The AI-first Code Editor — with Aman Sanger of Anysphere

Introduction to AI Model Quantization Formats, by Gen. David L.

LipingY – Page 11 – Deep Learning Garden

Quantization — The way of using ML model in Edge Devices.
