Fine-Tuning LLMs With Retrieval Augmented Generation (RAG)

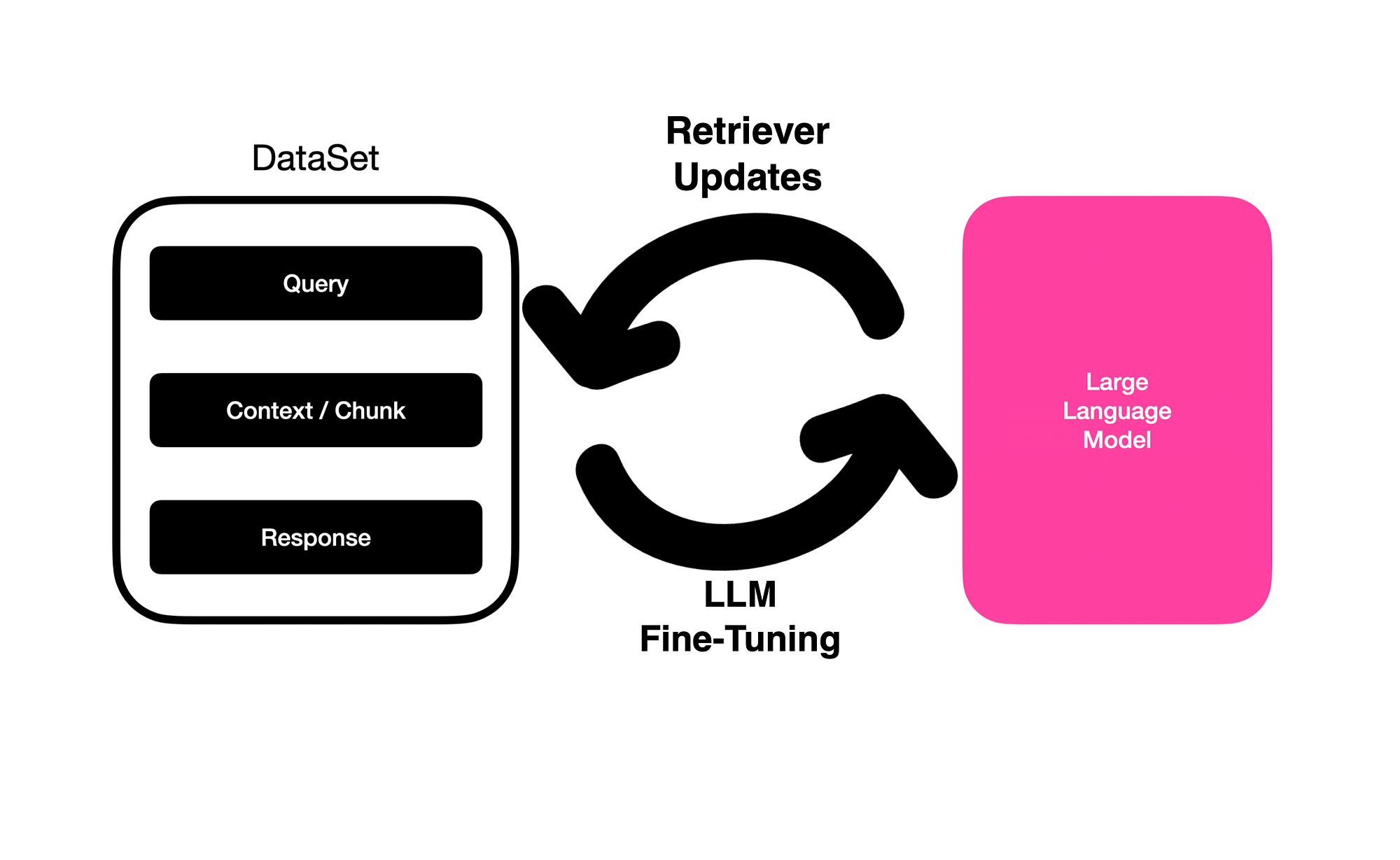

This approach is a novel implementation of RAG called RA-DIT (Retrieval-Augmented Dual Instruction Tuning), in which the RAG dataset (query, retrieved context, and response) is used to fine-tune an LLM…
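To make the idea concrete, here is a minimal sketch of how one (query, retrieved context, response) triple might be turned into a supervised fine-tuning record. The `RagExample` class, the prompt template, and the `prompt`/`completion` field names are illustrative assumptions, not the format used by RA-DIT or by any particular training library.

```python
import json
from dataclasses import dataclass


@dataclass
class RagExample:
    """One row of a RAG dataset (hypothetical container for illustration)."""
    query: str
    context: list[str]  # passages returned by the retriever
    response: str       # target answer the model should learn to produce


def to_training_record(example: RagExample) -> dict[str, str]:
    """Prepend the retrieved passages to the query and pair it with the response.

    The template below is an assumed layout; adapt it to your model's prompt format.
    """
    background = "\n".join(f"- {passage}" for passage in example.context)
    prompt = (
        f"Background:\n{background}\n\n"
        f"Question: {example.query}\n"
        "Answer:"
    )
    # Leading space so the completion separates cleanly from "Answer:".
    return {"prompt": prompt, "completion": " " + example.response}


def to_jsonl(examples: list[RagExample]) -> str:
    """Serialize records one JSON object per line, a common fine-tuning input format."""
    return "\n".join(json.dumps(to_training_record(e)) for e in examples)


if __name__ == "__main__":
    sample = RagExample(
        query="What data is used for fine-tuning?",
        context=["The RAG dataset holds query, retrieved context and response."],
        response="The query, the retrieved context, and the response.",
    )
    print(to_jsonl([sample]))
```

Keeping the retrieved passages inside the prompt means the model is trained on the same input shape it will see at inference time, when a retriever supplies the context.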

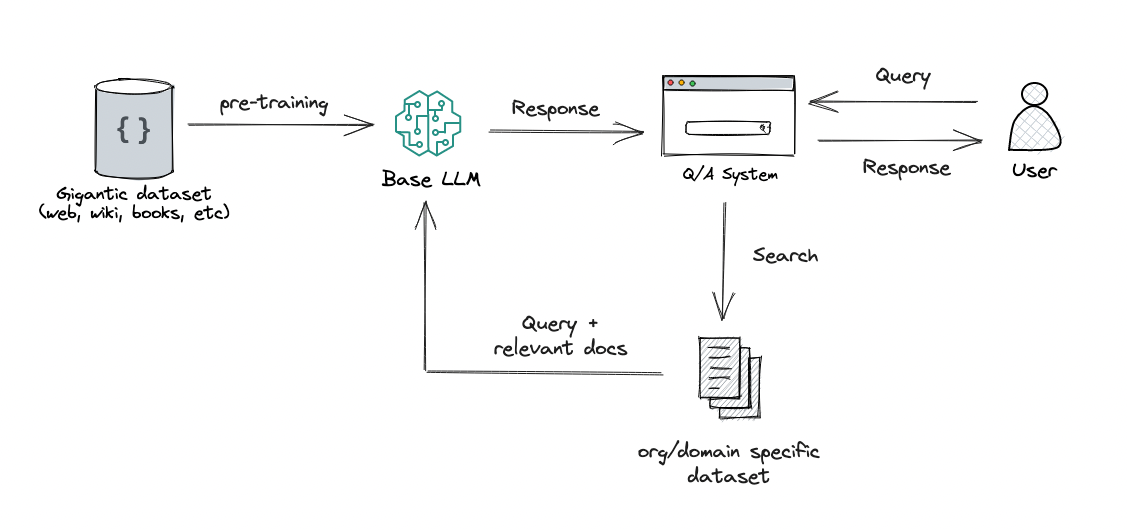

Leveraging LLMs on your domain-specific knowledge base, by Michiel De Koninck

Specializing LLMs for Domains: RAG 🧵vs. Fine-Tuning ⚡, by Peter Chung, Feb, 2024

List: RAG methods, Curated by Pradeep Mohan

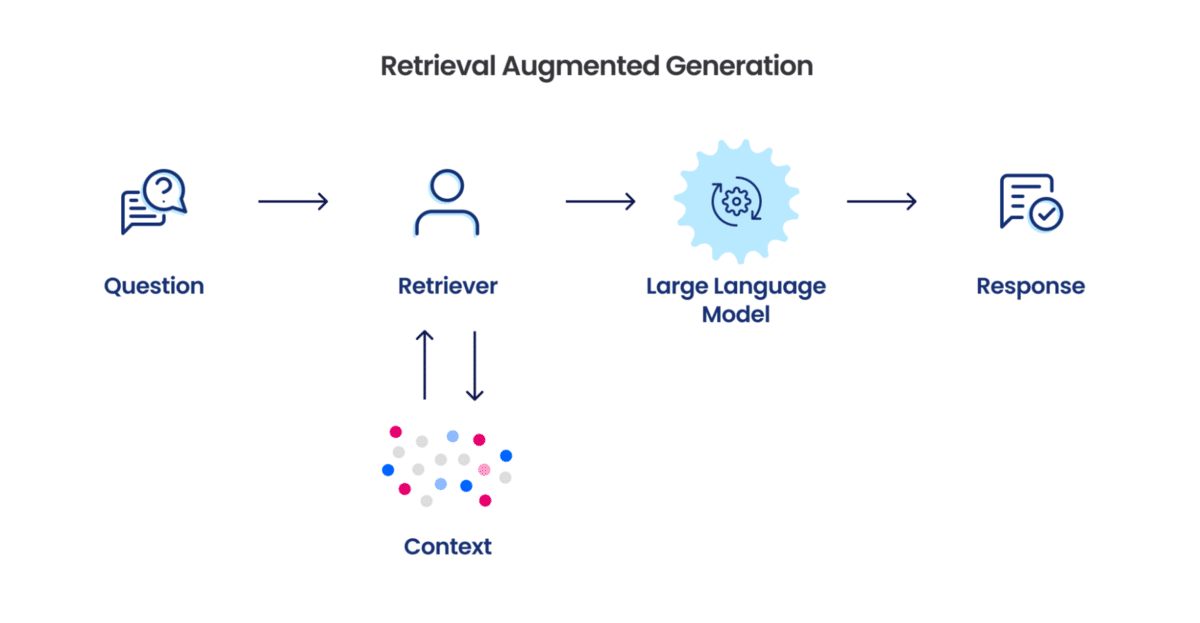

Retrieval Augmented Generation (RAG) - SageMaker

Retrieval-augmented generation. A working example for adding private…, by Mike Shwe

Knowledge Zone Topic Explorer

RAG vs Finetuning — Which Is the Best Tool to Boost Your LLM Application?, by Heiko Hotz

When Do You Use Fine-Tuning Vs. Retrieval Augmented Generation (RAG)? (Guest: Harpreet Sahota)

Fine-Tuning vs. Retrieval Augmented Generation in Large Language Models, by Nishad Ahamed

“NEFTune”: Discover How Noisy Embeddings Act as Catalyst to Improve Instruction Finetuning!, by AI TutorMaster

List: General ML & AI, Curated by Fabio Lazzarini

Which is better, retrieval augmentation (RAG) or fine-tuning? Both.

RAG VS FINE-TUNING. There are two common ways in which…, by Nitin Kushwaha