Frontiers Ps and Qs: Quantization-Aware Pruning for Efficient Low Latency Neural Network Inference

Visualization of the loss surface as a function of quantization ranges

Pruning and quantization for deep neural network acceleration: A survey - ScienceDirect

[PDF] Channel-wise Hessian Aware trace-Weighted Quantization of Neural Networks

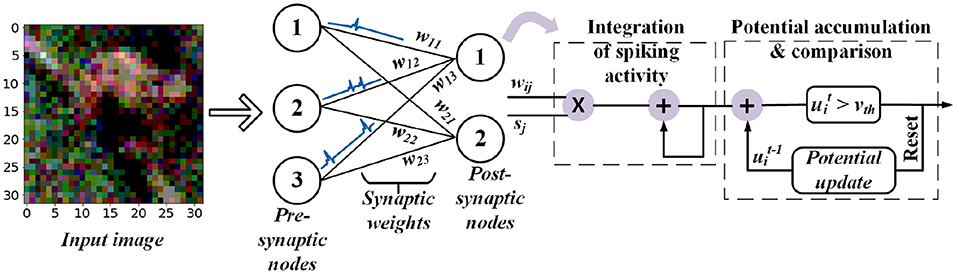

Frontiers Quantization Framework for Fast Spiking Neural Networks

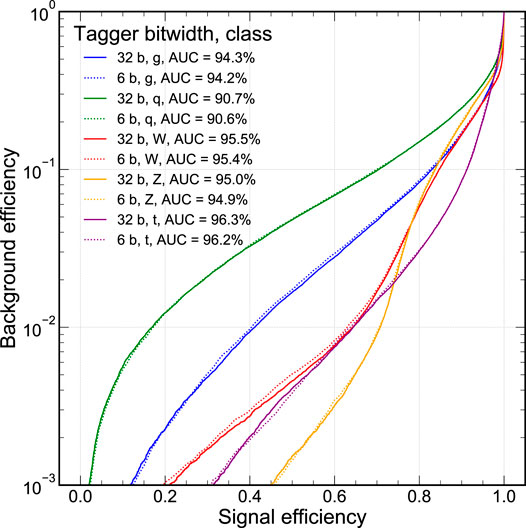

Automatic heterogeneous quantization of deep neural networks for low-latency inference on the edge for particle detectors

[PDF] Bayesian Bits: Unifying Quantization and Pruning

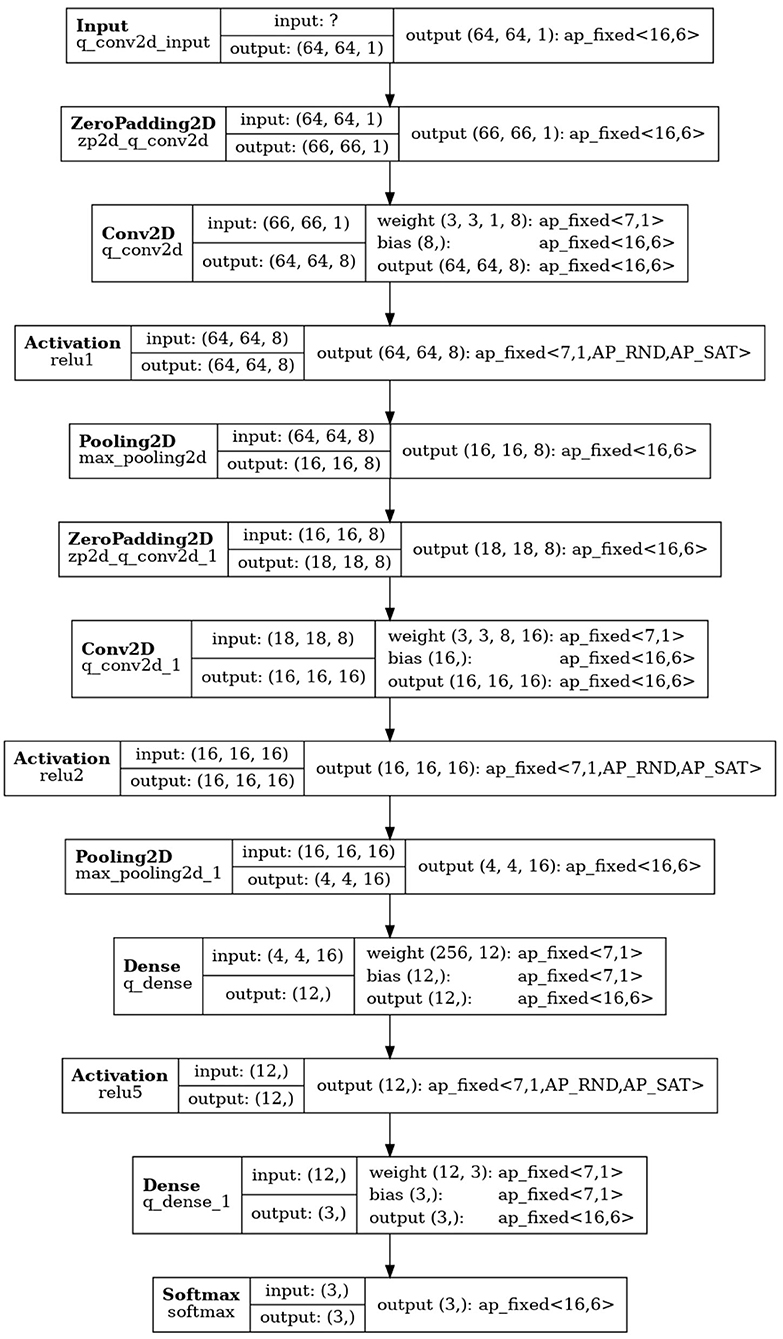

Chips, Free Full-Text

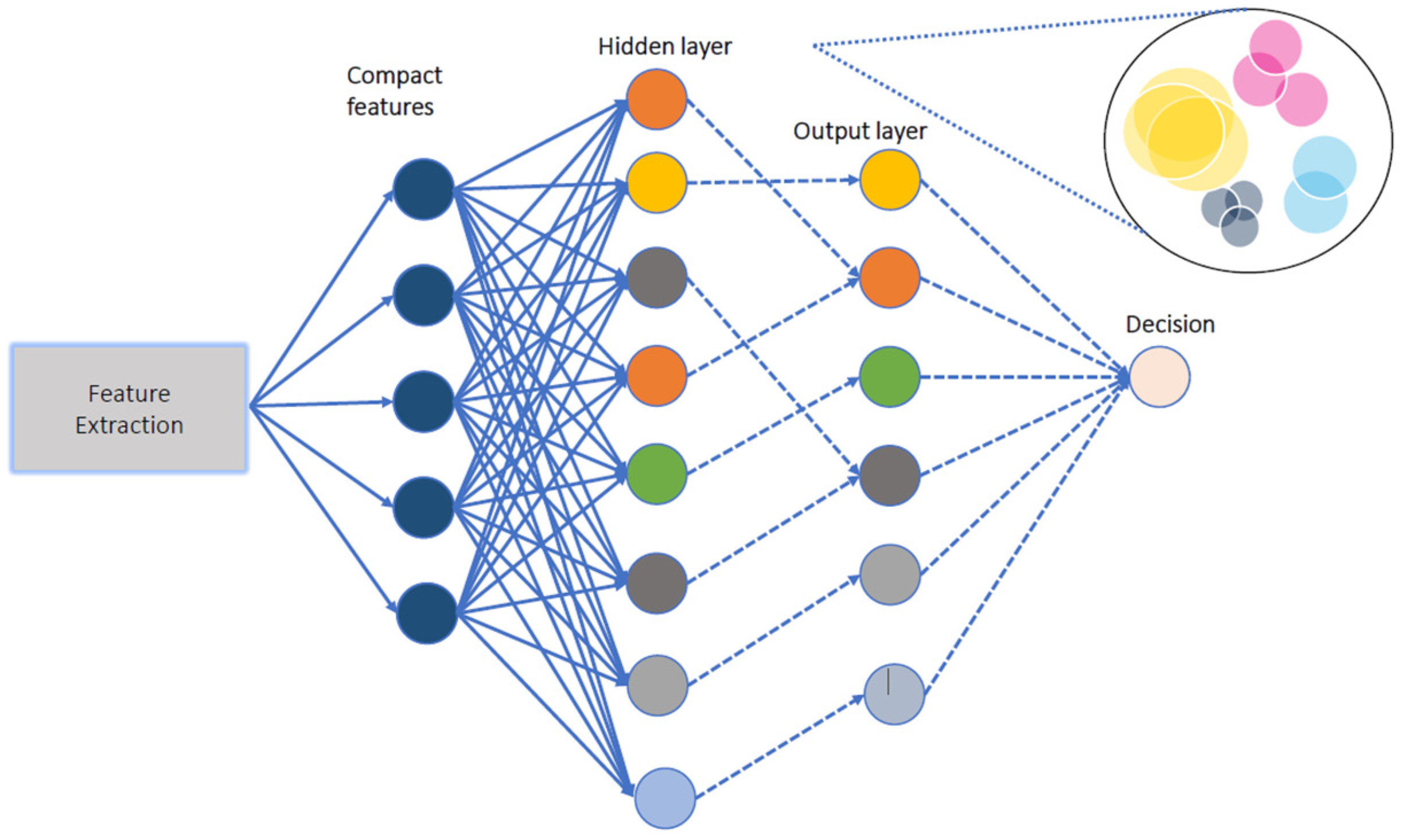

Frontiers ACE-SNN: Algorithm-Hardware Co-design of Energy-Efficient & Low-Latency Deep Spiking Neural Networks for 3D Image Recognition

Frontiers Real-Time Inference With 2D Convolutional Neural Networks on Field Programmable Gate Arrays for High-Rate Particle Imaging Detectors

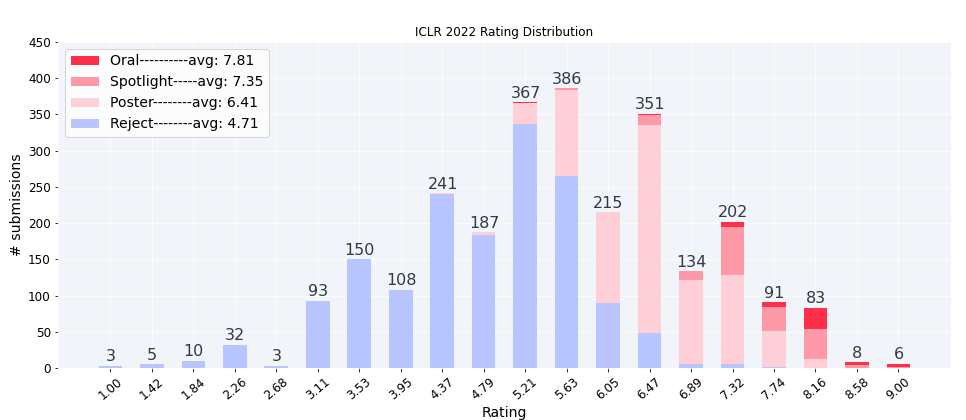

ICLR2022 Statistics

Sensors, Free Full-Text

Enabling Power-Efficient AI Through Quantization