Dataset too large to import. How can I import a certain number of rows every x hours? - Question & Answer - QuickSight Community

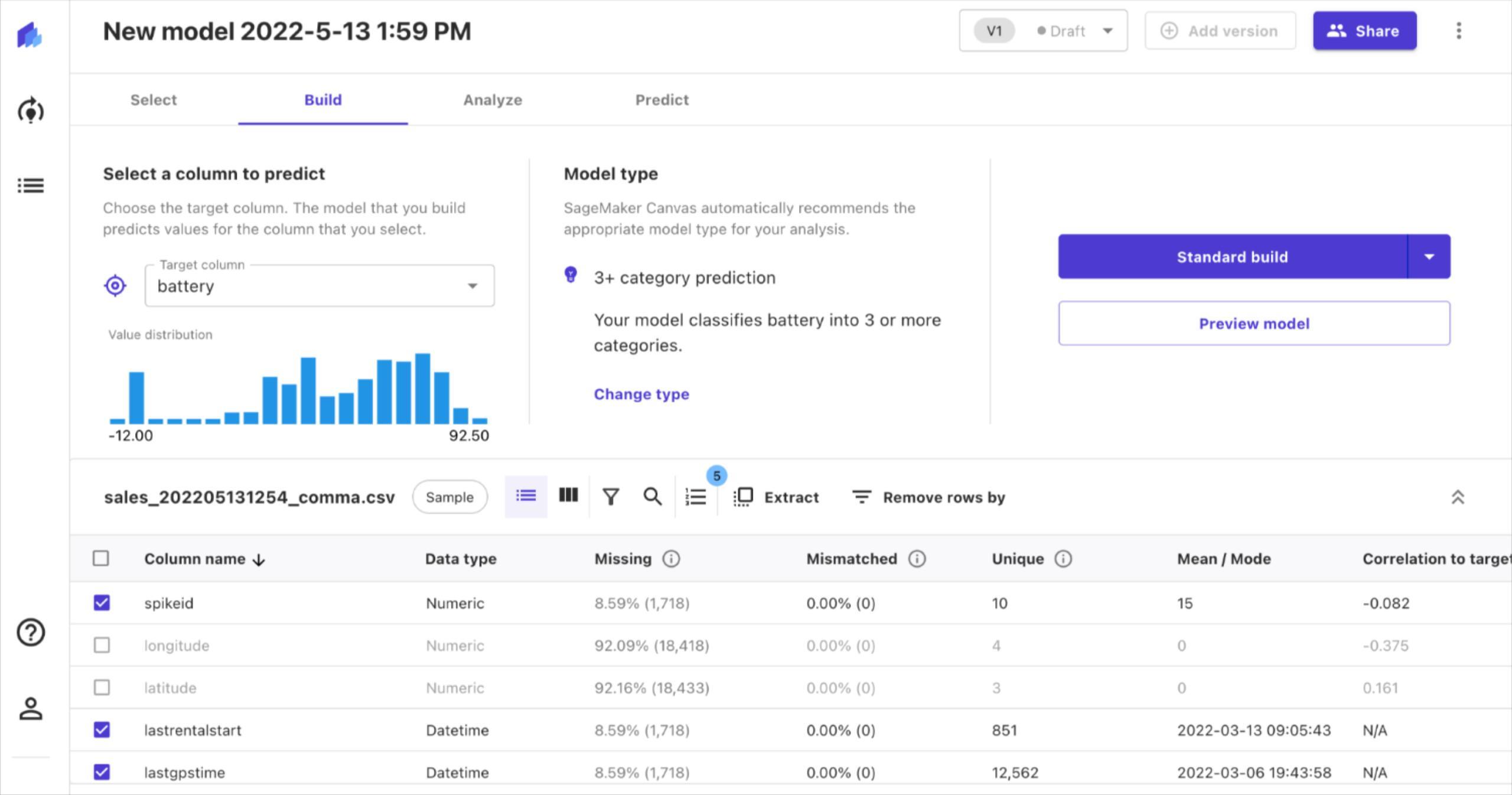

I'm trying to load data from a Redshift cluster, but the import fails because the dataset is too large to be imported into SPICE (Figure 1). How can I import, for example, 300k rows every hour so that I can slowly build up to the full dataset? Maybe an incremental refresh is the solution? The problem is that I don't understand what the "Window size" setting means. Do I put 300000 in this field (Figure 2)?
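For what it's worth, my current understanding (worth checking against the QuickSight docs) is that the incremental refresh window is a span of time measured on a date column, with a size and a unit such as hours, days, or weeks, rather than a row count. Here is a small sketch of what I think a time-based lookback window does. It does not call QuickSight at all; the helper `rows_in_window`, the `updated_at` column, and the sample rows are all made up for illustration.

```python
from datetime import datetime, timedelta

# Hypothetical helper: illustrates a time-based lookback window.
# This is NOT QuickSight's implementation, only a model of the idea.
def rows_in_window(rows, now, size, unit):
    # Assumed units; the window is "size" of these, counted back from "now".
    units = {
        "HOUR": timedelta(hours=1),
        "DAY": timedelta(days=1),
        "WEEK": timedelta(weeks=1),
    }
    cutoff = now - size * units[unit]
    # Only rows whose date column falls inside the window are picked up.
    return [r for r in rows if r["updated_at"] >= cutoff]

now = datetime(2024, 1, 10, 12, 0)
rows = [
    {"id": 1, "updated_at": datetime(2024, 1, 1, 9, 0)},
    {"id": 2, "updated_at": datetime(2024, 1, 9, 18, 0)},
    {"id": 3, "updated_at": datetime(2024, 1, 10, 11, 30)},
]

# A window of 1 DAY selects rows changed since 2024-01-09 12:00.
recent = rows_in_window(rows, now, 1, "DAY")
print([r["id"] for r in recent])
```

If that reading is right, entering 300000 would mean 300000 hours, days, or weeks, not 300000 rows, so the field would not cap the number of rows per refresh.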


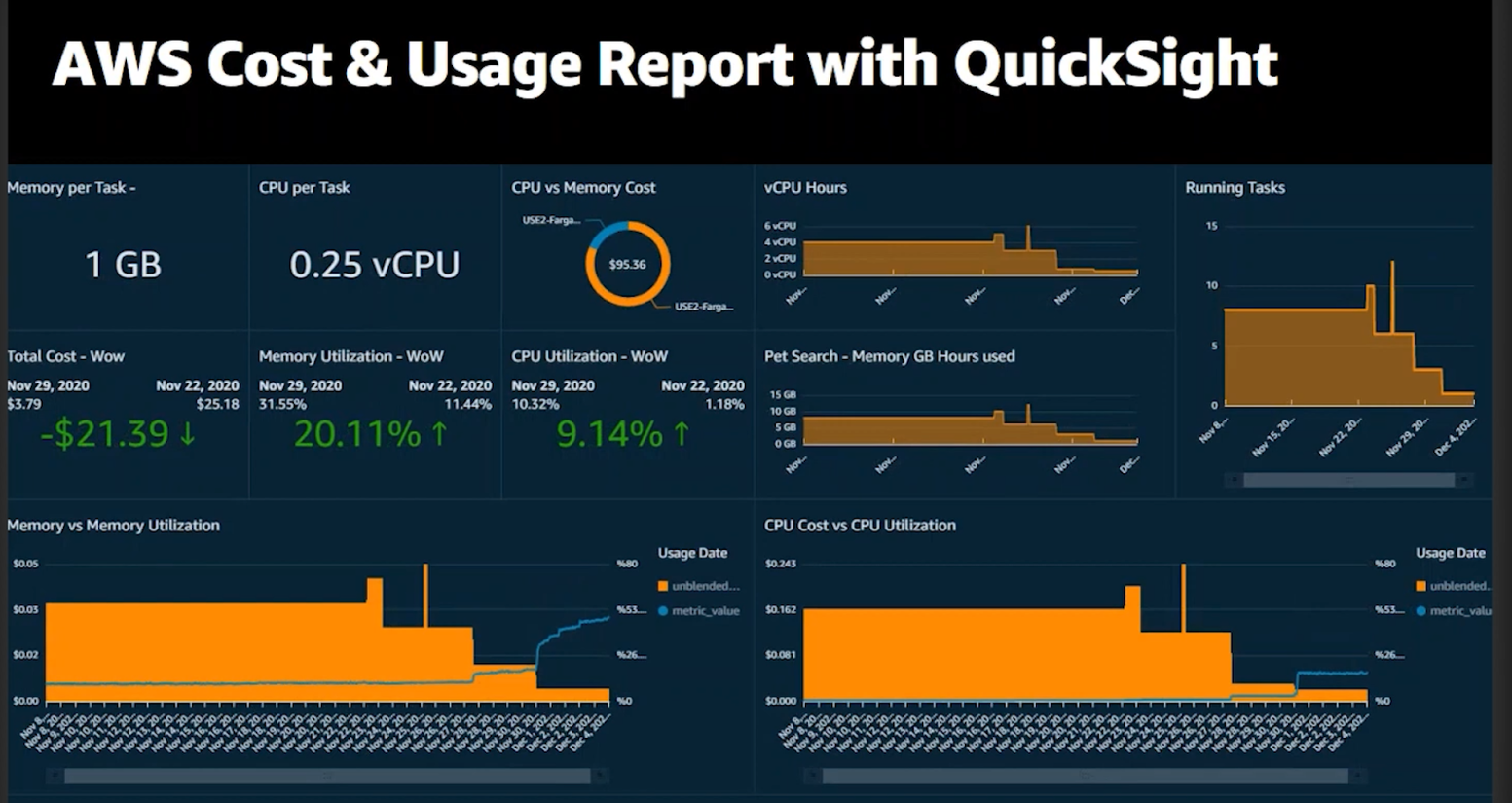
