Prompt Compression: Enhancing Inference and Efficiency with LLMLingua - Goglides Dev 🌱

Let's start with a fundamental concept and then dive deep into the project: What is Prompt

Tagged with promptcompression, llmlingua, rag, llamaindex.

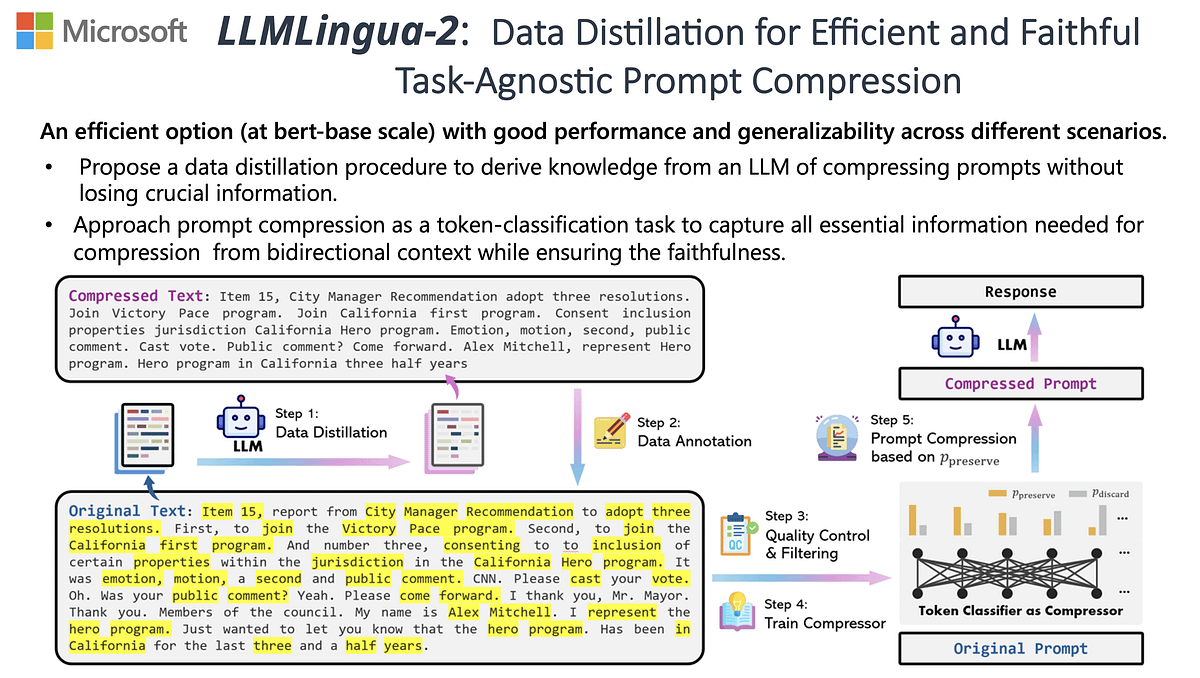

LLMLingua: Innovating LLM efficiency with prompt compression - Microsoft Research

LLMLingua: Compressing Prompts for Accelerated Inference of Large Language Models - ACL Anthology

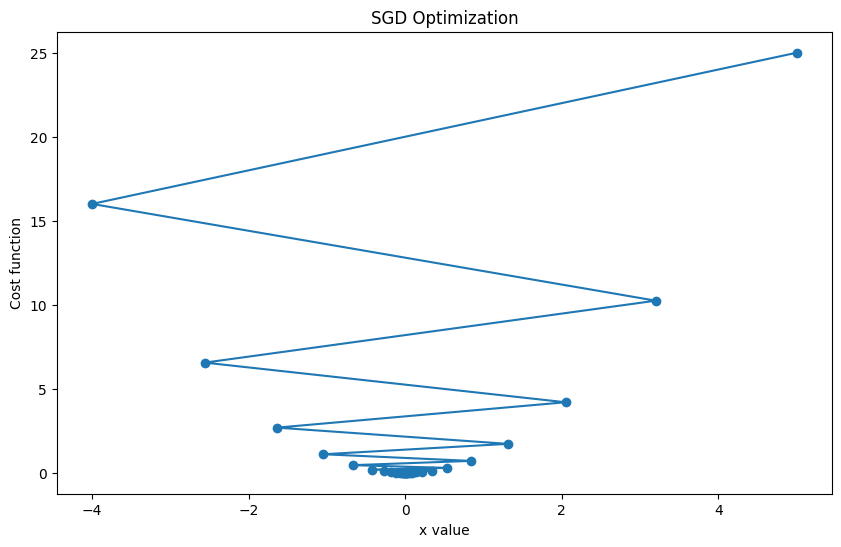

Deep Dive - Stochastic Gradient Descent (SGD) Optimizer - Goglides Dev 🌱

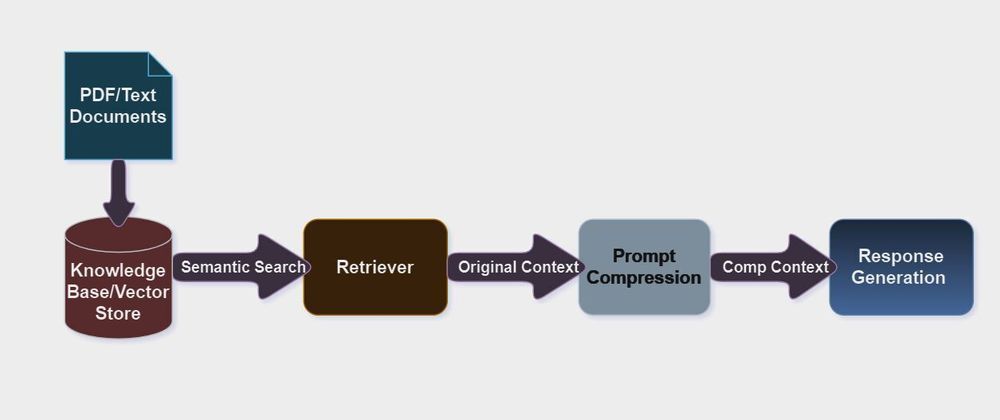

LLMLingua: Revolutionizing LLM Inference Performance through 20X Prompt Compression

Reduce Latency of Azure OpenAI GPT Models through Prompt Compression Technique, by Manoranjan Rajguru, Mar, 2024
